🔄🔄 MLOps Full Recap

Dive in!

This week we're finishing the MLOps series, one of our most popular series so far. Here is a full recap to help you catch up on the topics we covered. As the proverb goes (and many ML people would agree): repetition is the mother of learning ;)

Let’s start with a useful introduction to the whole category:

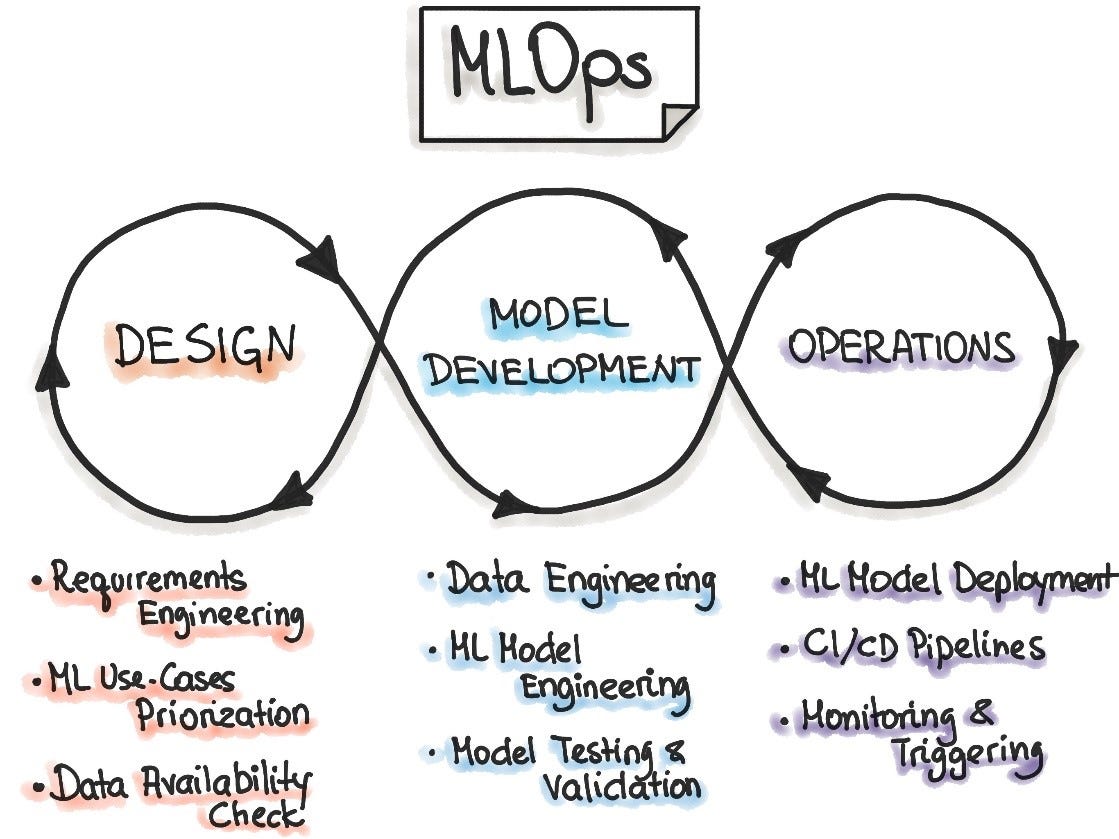

💡 ML Concept of the Day: What is MLOps?

One of the challenges in understanding MLOps is that the term itself is used very loosely in the ML community. In general, we should think of MLOps as an extension of DevOps methodologies, optimized for the lifecycle of ML applications.

Let’s see what else we’ve got for you:

In Edge#139 (you can read it without a subscription), we give an overview of MLOps as a concept; +TFX, a TensorFlow-based architecture created by Google for managing machine learning models; +MLflow, a platform for end-to-end machine learning lifecycle management.

In Edge#141, we discuss model monitoring; +Google’s research paper about the building blocks of interpretability; +a few ML monitoring platforms: Arize AI, Fiddler, WhyLabs, Neptune AI.

In Edge#143, we recap our articles dedicated to feature stores. Why did they become a crucial part of the MLOps stack?

In Edge#145 (you can read it without a subscription), we discuss model observability and how it differs from model monitoring; +Manifold, an architecture for debugging ML models; +Arize AI, a platform that provides the foundation for ML observability.

In Edge#147, we explain what model serving is; +the TensorFlow Serving paper; +TorchServe, a super simple serving framework for PyTorch.

In Edge#151, we discuss model packaging; +Typed Features at LinkedIn; +ONNX, a key framework for ML interoperability.

In Edge#153, we explore ML model versioning; +how Uber backtests and versions forecasting models at scale; +Lyft’s Amundsen, an open-source data discovery and versioning platform for data science workflows.

In Edge#155, we explain A/B testing for ML models; +how Meta AI uses ML A/B testing to improve its news feed ranking; +W&B, one of the top ML experimentation platforms on the market.

In Edge#157, we explore CI/CD in ML solutions; +Amazon’s continual learning architecture that manages the ML model lifecycle; +CML, an open-source library for enabling CI/CD in ML pipelines.

Our next series is absolutely fascinating: generative models, one of the fastest-growing areas of research in ML. Subscribe to receive the full articles. It’s a proven way to learn and stay up to date on the most important ML developments and research.