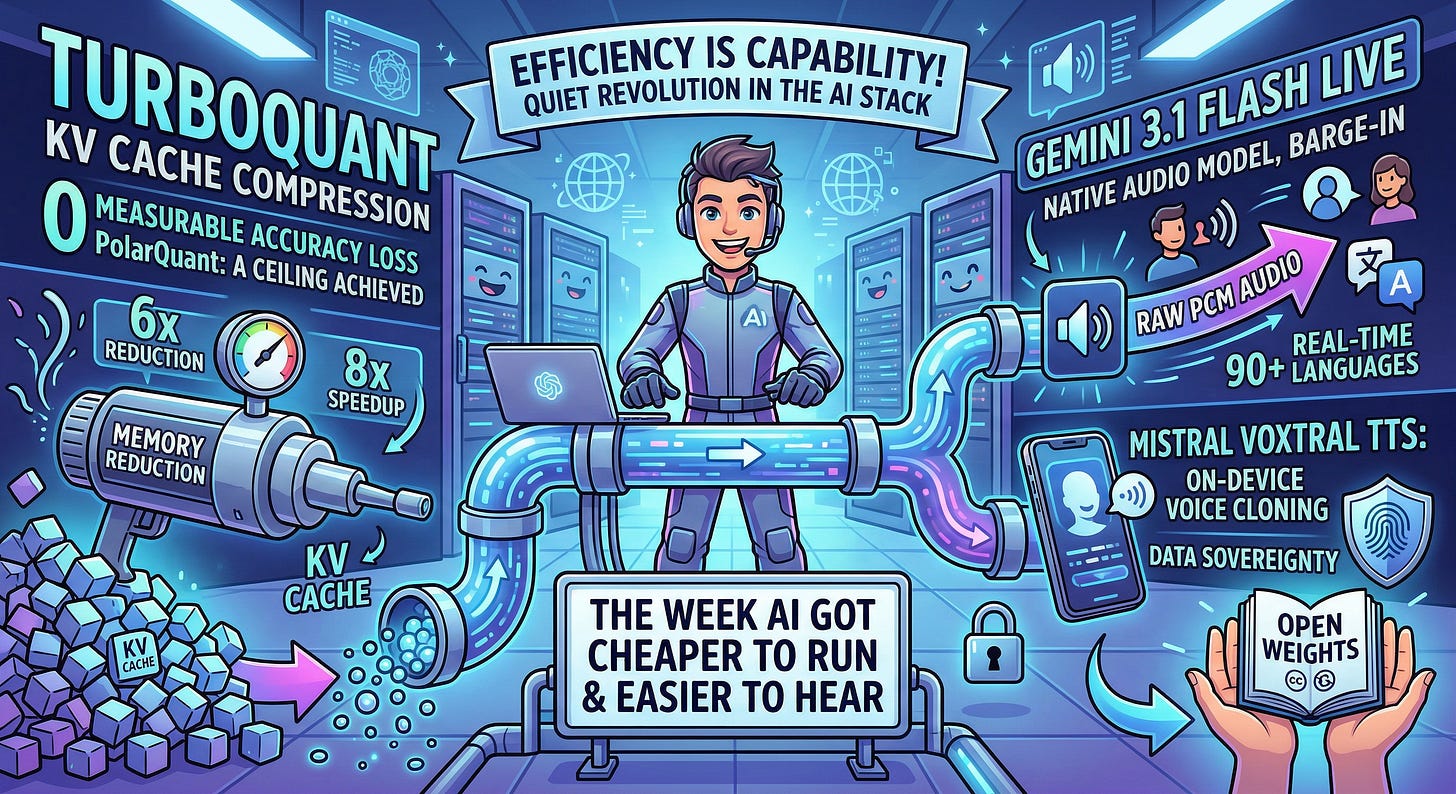

The Sequence Radar #832: Last Week in AI: Compression, Voice, and Why It All Matters

New algorithms and innovative voice models

Next Week in The Sequence:

An unexpected interview with one of the people that kick off the transformer revolution.

Our series about world models continues with a deep dive into the core architecture components of this new family of models.

The AI of the week section will focus on the amazing TurboQuant algorithm.

The opinion section dives into a topic I’ve been thinking a lot about lately, whether AI agents need insurance.

Subscribe and don’t miss out:

📝 Editorial: Compression, Voice, and Why It All Matters

This week in AI was quite pragmatic. Let’s talk about plumbing. Not the glamorous stuff — no new reasoning benchmarks, no AGI proclamations. Just three releases this week that quietly moved the floor on what’s possible, and why that matters more than most people realize.

TurboQuant: The KV Cache Problem, Finally Solved

Here is the dirty secret of LLM inference. As your context window grows, the key-value cache — the scratchpad the model uses to avoid recomputing attention over every prior token — grows with it. Linearly. For long-context workloads, this cache becomes the dominant consumer of GPU memory, and memory is the binding constraint on how many users you can serve per dollar.

Google Research dropped TurboQuant this week, and the numbers are genuinely striking. By converting KV vectors from Cartesian to polar coordinates (PolarQuant), the angular distributions become highly predictable, letting you skip the expensive per-block normalization constants that make traditional quantization schemes eat back their own gains. The second stage, QJL, reduces each vector to a single sign bit using the Johnson-Lindenstrauss transform, adding a precision-balanced estimator to keep attention scores accurate. The result: 3-bit KV cache compression with zero measurable accuracy loss, 6x memory reduction, and up to 8x speedup on H100s. Training-free. Drop-in.

The information-theoretic framing here is important. TurboQuant’s error is approaching the Shannon lower bound — which means we’re not just winning, we’re near the ceiling of what’s achievable through compression alone. This is a statement about where the field is, not just about what Google built. The next gains in long-context inference will have to come from somewhere else: sparse attention, better architectures, or smarter eviction policies.

Voice Week: Two Very Different Bets

Google shipped Gemini 3.1 Flash Live the same week, and it’s the clearest signal yet that the old voice stack — VAD → STT → LLM → TTS, four sequential hops with four latency budgets — is getting replaced. 3.1 Flash Live collapses this into a single native audio model that processes raw PCM bidirectionally, supports barge-in mid-sentence, and reaches over 90 languages in real time. It scored 36.1% on Scale AI’s Audio MultiChallenge — not perfect, but the right benchmark to watch, because it evaluates coherence under interruption, the hardest failure mode of the old pipeline. Search Live is now rolling on this model in 200+ countries. That’s a quiet global deployment of a meaningfully new architecture.

Mistral took the opposite approach and it’s equally interesting. Voxtral TTS is 4B parameters, built on Ministral 3B, runs on a smartphone, voice-clones from under five seconds of audio, and ships with open weights under Creative Commons. Time-to-first-audio is 90ms. The pitch to enterprise isn’t “better voice” — it’s “your voice, on your hardware, never leaving your datacenter.” For regulated industries processing audio, the data sovereignty angle is a genuine moat.

None of this week releases are capability jumps — they’re efficiency jumps. But in a world where inference cost is the binding constraint on where AI can go, efficiency is capability. The stack is getting cheaper at every layer, faster than the models are getting bigger.

That’s the story of this week. Worth paying attention to.

🔎 AI Research

TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate

AI Lab: Google Research, New York University, Google DeepMind

Summary: This paper introduces TURBOQUANT, an online, data-oblivious vector quantization algorithm that achieves near-optimal distortion rates for both mean-squared error (MSE) and inner product estimation. It uses random rotations and optimal scalar quantizers, demonstrating marginal quality degradation at 2.5 bits per channel for KV cache quantization and superior recall in nearest neighbor searches.

HYPERAGENTS

AI Lab: University of British Columbia, Vector Institute, University of Edinburgh, New York University, Meta

Summary: HYPERAGENTS extends the Darwin Gödel Machine framework to enable self-referential AI agents that can iteratively modify and improve their own self-improvement mechanisms across any computable task. By combining task execution and meta-level modification into a single editable program, these agents successfully accelerate progress and improve their own learning architectures in domains ranging from coding to robotics reward design.

Agentic AI and the next intelligence explosion

AI Lab: Google, University of Chicago, Santa Fe Institute, Antikythera, Berggruen Institute, University of California, San Diego

Summary: This paper challenges the idea of a monolithic AI singularity, arguing instead that future transformative intelligence will emerge from complex, socially organized interactions among multitudes of AI agents and humans. The authors emphasize that building scalable, cooperative “agent institutions” and constitutional checks and balances is critical for safely managing the combinatorial explosion of intelligence.

Voxtral TTS

AI Lab: MistralAI

Summary: Voxtral TTS is an expressive, multilingual text-to-speech model that uses a hybrid architecture—combining auto-regressive generation for semantic tokens and flow-matching for acoustic tokens—to clone voices from just 3 seconds of reference audio. Leveraging a custom low-bitrate speech tokenizer and accelerated via vLLM-Omni and CUDA graphs, it achieves highly natural, low-latency streaming inference, ultimately outperforming strong baselines like ElevenLabs Flash v2.5 in human preference evaluations for voice cloning.

A Foundation Model of Vision, Audition, and Language for In-silico neuroscience

AI Lab: FAIR at Meta, Laboratoire de Neurosciences Cognitives et Computationnelles, Ecole Normale Supérieure PSL

Summary: This paper introduces TRIBE v2, a tri-modal foundation model that leverages video, audio, and language inputs to accurately predict high-resolution human brain activity across a wide range of naturalistic and experimental conditions. Trained on over 1,000 hours of fMRI data, the model surpasses traditional linear encoding methods and enables zero-shot in silico experimentation, successfully recovering classic neuroscientific findings and revealing the fine-grained topography of multisensory integration

FinMCP-Bench: Benchmarking LLM Agents for Real-World Financial Tool Use under the Model Context Protocol

AI Lab: Qwen DianJin Team (Alibaba Cloud Computing), YINGMI Wealth Management, and Soochow University

Summary: This paper introduces FinMCP-Bench, a comprehensive benchmark designed to evaluate how well large language models can invoke real-world financial tools using the Model Context Protocol (MCP). Featuring 613 diverse samples that range from single-tool tasks to complex multi-turn interactions, the benchmark reveals that while leading models perform reasonably well, accurately handling complex multi-tool dependencies remains a significant challenge.

LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels

AI Lab: Mila, Université de Montréal, New York University, Samsung SAIL, Brown University

Summary: LeWorldModel (LeWM) is an end-to-end Joint Embedding Predictive Architecture (JEPA) that learns stable world models directly from raw pixels using only a next-embedding prediction loss and a Gaussian regularization term. Operating with just 15M parameters and minimal hyperparameter tuning, LeWM achieves competitive control task performance and plans up to 48x faster than foundation-model-based alternatives while demonstrating strong intuitive physical understanding.

🤖 AI Tech Releases

Gemini 3.1 Flash Live

Google released Gemini 3.1 Flash Live, a new audio and voice model.

Claude Computer Use

Anthropic unveiled a research preview of computer use capabilities for Claude Code and Claude Work.

Voxtral TTS

Mistral released Voxtral TTS, its first text to speech model.

📡AI News You Need to Know About

Deccan AI — $25M Series A Deccan AI, a post-training data and evaluation startup leveraging an India-based network of over 1M expert contributors, raised a $25M Series A led by A91 Partners to serve frontier AI labs like Google DeepMind and Snowflake. Source: TechCrunch (no company blog post found)

Harvey — $11B valuation Legal AI startup Harvey closed a $200M round co-led by GIC and Sequoia at an $11B valuation, bringing total funding past $1B with Sequoia having now co-led three rounds since the Series A.

Granola — $125M Series C AI meeting notetaker Granola raised $125M at a $1.5B valuation led by Index Ventures, 6x-ing its valuation in under a year as it expands from a prosumer notetaking app into an enterprise platform with team workspaces and APIs.

Kleiner Perkins — $3.5B new funds Kleiner Perkins raised $3.5B across two AI-focused funds ($1B early-stage KP22 and $2.5B growth fund KP Select IV), a 75% increase over its 2024 raise, fueled by returns from Figma’s IPO and early stakes in Anthropic, Harvey, and SpaceX.

Doss — $55M Series B Doss raised a $55M Series B co-led by Madrona and Premji Invest to build an AI-native inventory management layer that plugs into existing ERPs and accounting platforms rather than replacing them, targeting mid-market consumer brands.

Air Street Capital — $232M Fund III London-based Air Street Capital, led by solo GP Nathan Benaich, closed a $232M Fund III focused on early-stage AI companies across Europe and North America, becoming one of Europe’s largest solo VC funds with $400M AUM.

Periodic Labs — ~$7B valuation talks Periodic Labs, the materials-science AI startup founded by former OpenAI VP Liam Fedus and ex-DeepMind researcher Ekin Dogus Cubuk, is in early talks to raise at a ~$7B valuation, up from $1.3B at its $300M seed last September.

Anthropic wins injunction against Trump administration A federal judge ordered the Trump administration to rescind its designation of Anthropic as a “supply chain risk” and back off its order that federal agencies cut ties with the company, ruling the government’s actions violated the company’s free speech protections.

SoftBank secures $40B bridge loan for OpenAI SoftBank confirmed it has secured a $40 billion unsecured bridge loan maturing in March 2027, arranged with lenders including JPMorgan Chase, Goldman Sachs, Mizuho Bank, SMBC, and MUFG, to fund further investments in OpenAI and for general corporate purposes. U.S. News & World Report

Meta raises El Paso data center investment to $10B Meta announced it is increasing its investment in the El Paso data center from $1.5 billion to over $10 billion, which will support more than 300 permanent jobs and require over 4,000 construction workers at peak.