The Sequence Radar #820: GPT-5.4, Cursor, and the New Desktop

A week of massive developments in agentic AI.

Next Week in The Sequence:

Our series on world models explores world models in 4D space.

We take a deep dive into GPT-5.4.

In the opinion section, we debate the highly controversial topic of the end of SaaS.


📝 Editorial: When AI Takes the Mouse: GPT-5.4, Cursor, and the New Desktop

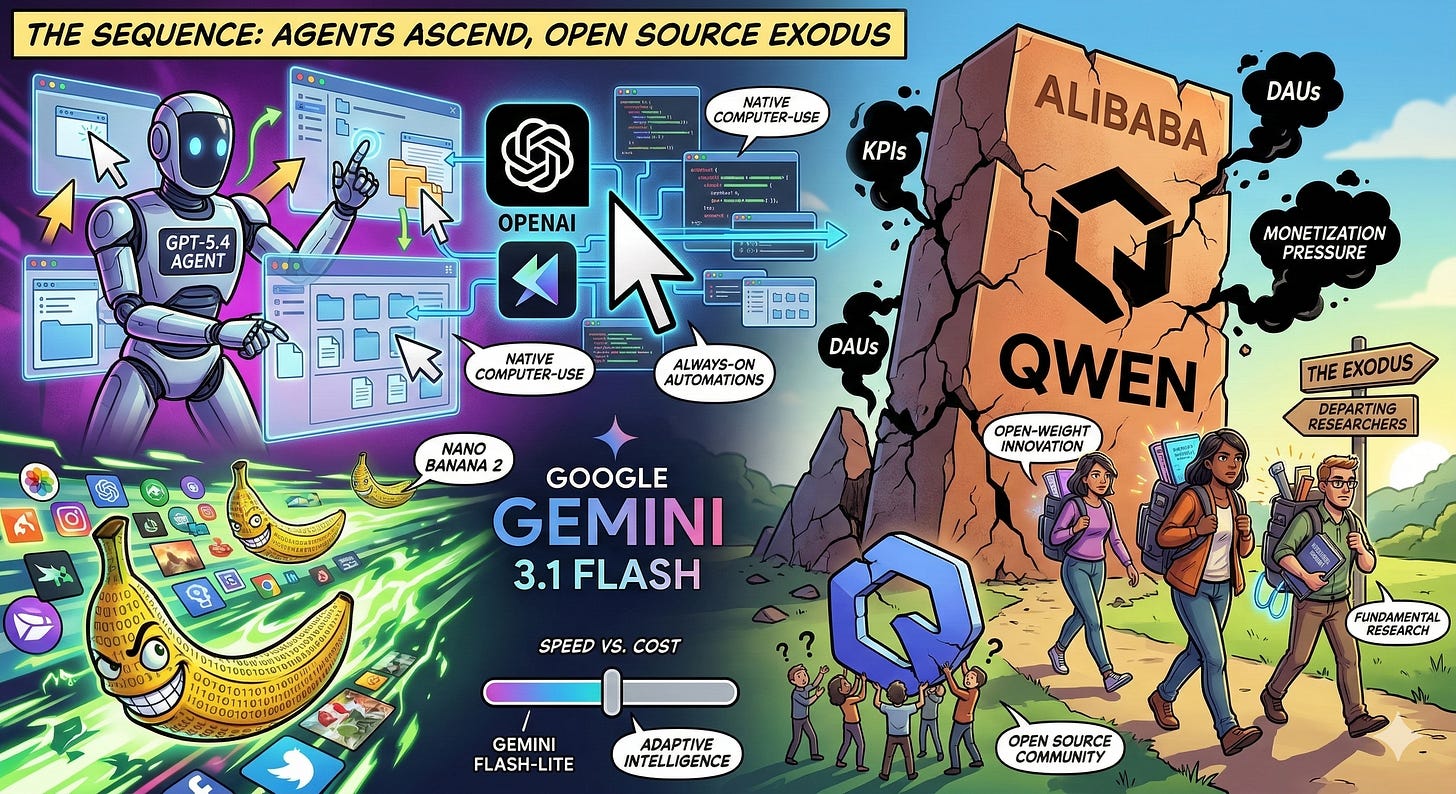

Welcome to this week’s edition of The Sequence. The contrast between algorithmic triumph and organizational fragility couldn’t be starker right now. While proprietary AI models are crossing the Rubicon into autonomous, always-on digital workers, the human engines driving open-weight breakthroughs are colliding head-first with corporate bureaucracy. Here is what you need to know about this week’s seismic shifts.

OpenAI & Cursor: The Agentic Desktop

OpenAI just redefined the frontier with the release of GPT-5.4 and its reasoning-focused counterpart, GPT-5.4 Thinking. The headline isn’t just better benchmarks; it’s native computer-use capability. GPT-5.4 can interact directly with software interfaces: interpreting screenshots, clicking, scrolling, and executing multi-step workflows across native desktop applications. With support for a 1-million-token context window, it represents a major step toward reliable agents capable of navigating the unstructured environments of modern knowledge work.

This agentic shift is equally explosive in the developer ecosystem. Cursor just unveiled Automations, a system designed to manage always-on coding agents. Instead of waiting for a developer’s prompt, Cursor’s agents are now triggered asynchronously by code changes, PagerDuty incidents, or scheduled timers. They operate autonomously in the background—reviewing pull requests, investigating server logs, and running exhaustive test suites. The paradigm is officially shifting from AI as an interactive copilot to AI as an asynchronous, parallel coworker.

Google’s Need for Speed: Gemini Flash

Google, meanwhile, is optimizing for sheer velocity and cost-efficiency. They launched Gemini 3.1 Flash-Lite in preview, delivering frontier-class reasoning at a fraction of the compute cost—ideal for high-volume, low-latency tasks.

Complementing this text model is the release of Nano Banana 2, officially designated as Gemini 3.1 Flash Image. Google has successfully mapped the high-speed intelligence of its Flash architecture onto visual generation. Nano Banana 2 merges the complex prompt adherence and precise text-rendering of previous “Pro” models with lightning-fast inference. Rolling out as the default across the Gemini app and Google Workspace, it allows developers to generate production-ready assets and execute rapid, iterative image editing at unparalleled speeds.

The Qwen Exodus: A Cautionary Tale

But while proprietary giants accelerate, the open-weight ecosystem just suffered a devastating blow. Less than 24 hours after releasing the highly praised, intelligence-dense Qwen 3.5 small models, Alibaba’s core AI team began to disintegrate. Technical lead Junyang Lin, alongside key researchers like Binyuan Hui and Kaixin Li, abruptly resigned.

Insiders report a textbook clash of paradigms: Alibaba is breaking up the vertically integrated research team into horizontally segmented, KPI-driven units desperate to boost Daily Active Users (DAUs). Replacing a core fundamental research mandate with metrics meant to aggressively monetize consumer chatbots has alienated the talent that drove Qwen past 600 million downloads. If Alibaba pivots fully to closed monetization, the community loses one of its most vital engines of open-source innovation.

🔎 AI Research

Beyond Language Modeling: An Exploration of Multimodal Pretraining

AI Lab: FAIR, Meta, and New York University

Summary: This paper investigates unified multimodal pretraining from scratch using the Transfusion framework to jointly learn language and vision capabilities. Key findings include the identification of Representation Autoencoders as an optimal visual representation and the discovery that vision is significantly more data-hungry than language.

Qwen3-Coder-Next Technical Report

AI Lab: Qwen Team (Alibaba)

Summary: The report introduces Qwen3-Coder-Next, an 80-billion-parameter Mixture-of-Experts model specialized for coding agents that activates only 3 billion parameters during inference. The model achieves strong performance on coding benchmarks by leveraging large-scale agentic training with verifiable tasks paired with executable environments.

DREAM: Where Visual Understanding Meets Text-to-Image Generation

AI Lab: MIT CSAIL and Meta AI

Summary: The researchers present DREAM, a unified framework that jointly optimizes discriminative and generative objectives to improve both visual understanding and text-to-image generation. The system utilizes a progressive masking schedule called Masking Warmup and a novel Semantically Aligned Decoding strategy to achieve high-fidelity image synthesis.

KARL: Knowledge Agents via Reinforcement Learning

AI Lab: Databricks AI Research

Summary: This paper introduces KARL, a system for training enterprise search agents using reinforcement learning to achieve state-of-the-art performance on complex, grounded reasoning tasks. By employing a multi-task off-policy RL paradigm and an agentic synthesis pipeline, the model demonstrates superior cost-efficiency and generalization across diverse search regimes compared to leading closed models.

Agentic Code Reasoning

AI Lab: Meta

Summary: This study explores “agentic code reasoning,” which allows LLM agents to navigate codebases and perform deep semantic analysis without executing any code. The authors introduce semi-formal reasoning, a structured prompting methodology that uses templates to significantly improve accuracy on tasks like patch equivalence and fault localization.

Bayesian Teaching Enables Probabilistic Reasoning in Large Language Models

AI Lab: Massachusetts Institute of Technology, Meta, Google DeepMind, and Google Research

Summary: This research identifies that off-the-shelf large language models (LLMs) struggle with updating probabilistic beliefs during multi-round interactions, often plateauing after a single exchange. By using "Bayesian teaching" (fine-tuning LLMs to mimic the predictions of a normative Bayesian model), researchers significantly improved the models' ability to adapt to user preferences and demonstrated that these reasoning skills generalize to entirely new tasks.
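To make the idea of a "normative Bayesian model" concrete, here is a minimal sketch of multi-round belief updating over a user's latent preference. It is an illustration only, not the paper's setup: the hypothesis names, the likelihood values, and the `bayes_update` helper are all assumptions made up for this example.

```python
from typing import Dict, List


def bayes_update(prior: Dict[str, float], likelihood: Dict[str, float]) -> Dict[str, float]:
    """Return the posterior P(h | observation) given a prior P(h) and P(observation | h)."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical hypotheses about what the user cares about, with a uniform prior.
belief: Dict[str, float] = {"cheapest": 1 / 3, "fastest": 1 / 3, "fewest_stops": 1 / 3}

# Assumed likelihoods: how probable the user's observed choice in each round
# would be under each hypothesis (values invented for illustration).
rounds: List[Dict[str, float]] = [
    {"cheapest": 0.8, "fastest": 0.3, "fewest_stops": 0.3},
    {"cheapest": 0.7, "fastest": 0.2, "fewest_stops": 0.5},
    {"cheapest": 0.9, "fastest": 0.4, "fewest_stops": 0.1},
]

# Keep every intermediate belief so the round-by-round change is visible.
history: List[Dict[str, float]] = [belief]
for likelihood in rounds:
    belief = bayes_update(belief, likelihood)
    history.append(belief)

for i, b in enumerate(history):
    print(f"after round {i}: " + ", ".join(f"{h}={p:.3f}" for h, p in b.items()))
```

The point of the sketch is that the belief keeps moving with each new observation instead of plateauing after the first one. That round-by-round behavior is what the summary says the fine-tuned models learn to imitate.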

🤖 AI Tech Releases

GPT-5.4

OpenAI released GPT-5.4 in ChatGPT, the API, and Codex, adding native computer-use capability and a 1-million-token context window.

Gemini 3.1 Flash-Lite

Google released Gemini 3.1 Flash-Lite in preview, a version of its latest model optimized for high-volume, low-latency workloads.

Cursor Automations

Cursor unveiled Automations, its most aggressive agentic release to date.

Qwen 3.5 Small

Alibaba Qwen released a series of small versions of its new 3.5 model.

Phi-4 Reasoning Vision

Microsoft released Phi-4-reasoning-vision-15B, a multimodal model for visual reasoning.

📡 AI News You Need to Know About

Decagon completes first tender offer at $4.5B valuation — The AI customer support startup completed its first employee tender offer, allowing 300+ employees to sell vested shares at its latest $4.5B valuation (3x from $1.5B in June). The offer was led by Coatue, Index, a16z, and others who also backed its recent $250M Series D. The details come from a TechCrunch exclusive with CEO Jesse Zhang.

Cursor surpasses $2B in annualized revenue — The AI coding assistant’s revenue run rate reportedly doubled over three months, with corporate customers now accounting for roughly 60% of revenue. The disclosure appeared timed to counter viral skepticism about Cursor losing users to Claude Code.

Anthropic’s Claude experiences widespread outage — Claude.ai and Claude Code went down for thousands of users, primarily affecting login/logout paths, while the API remained operational. The outage followed a surge of new users after the Pentagon standoff pushed Claude to the top of the App Store.

OpenAI announces Pentagon deal with “technical safeguards” — Sam Altman announced that OpenAI’s new DoD contract includes prohibitions on domestic mass surveillance and a requirement of human responsibility for the use of force, including by autonomous weapons. These are the same red lines that Anthropic had fought for. Altman asked the Pentagon to offer these terms to all AI companies.

Pentagon officially designates Anthropic a supply chain risk; Claude still used in Iran — The DoD formally labeled Anthropic a supply chain risk, barring defense contractors from using Claude. Ironically, the Pentagon continues using Claude in its Iran military operations since it's too deeply embedded in classified networks to remove quickly. Anthropic plans to challenge the designation in court; Microsoft confirmed it will keep Claude available to non-defense customers.

Meta plans custom chips for AI training — CFO Susan Li said at the Morgan Stanley tech conference that Meta plans to develop in-house processors for training AI models, expanding beyond its current custom silicon for ranking and recommendation workloads. Meta has struck massive recent chip deals with Nvidia and AMD but views proprietary silicon as key for its unique workloads.

Alibaba’s Qwen tech lead Junyang Lin steps down — The architect of Alibaba’s Qwen AI model family unexpectedly resigned, posting a brief farewell on X, triggering a surge of community support and contributing to a 5.3% drop in Alibaba’s Hong Kong shares. He was the third senior Qwen executive to leave in 2026, following departures by the post-training head and the coding lead. The departures are reportedly tied to a corporate reorganization that dismantled the team’s autonomous structure.

Mistral AI launches AI services for finance — The French AI startup unveiled a suite of AI services aimed at banks and hedge funds that lets firms deploy tailored AI within their own infrastructure rather than giving up data control to a third party. The launch was announced at Bloomberg Invest by CRO Marjorie Janiewicz.

Nvidia invests $4B in data center optics companies — Nvidia agreed to invest $2B each in Lumentum and Coherent in multiyear deals that include purchase commitments and capacity access rights for advanced laser and optical networking components. The investments will support U.S.-based manufacturing expansion and R&D for silicon photonics critical to scaling next-generation AI data centers.