The Sequence AI of the Week #855: Inside Nemotron Omni: NVIDIA’s New Multimodal Brain for Agents

The new member of the Nemotron family is an incredibly impressive release.

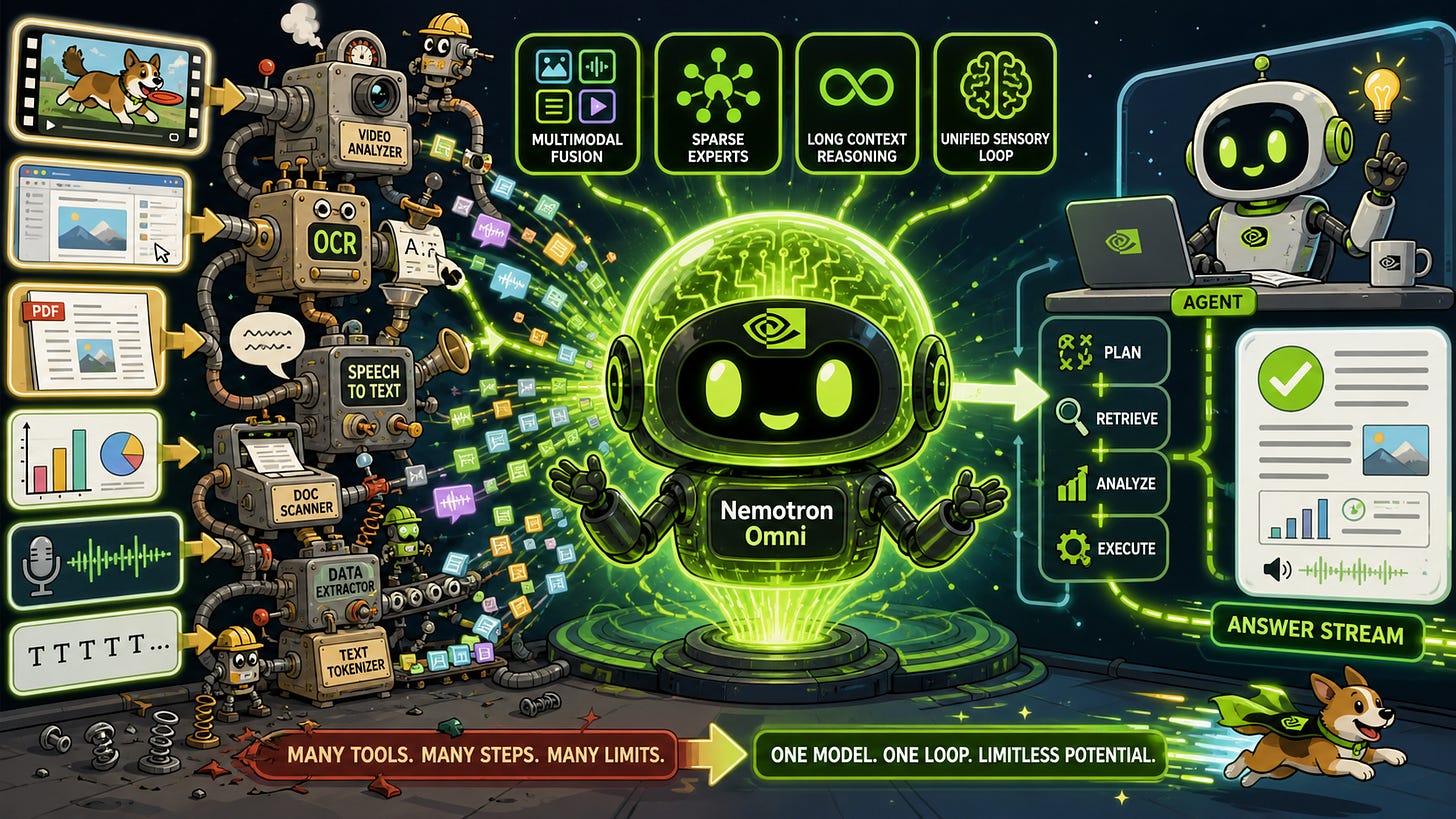

The interesting thing about NVIDIA’s new Nemotron 3 Nano Omni is not that it “does multimodality.” We already have a zoo of models that can caption images, transcribe speech, parse PDFs, answer questions about videos, and click around GUIs. The interesting thing is that Nemotron Omni is designed to make that zoo feel like a single animal.

Today’s multimodal agent stack often looks like a Rube Goldberg machine: audio goes to an ASR model, screenshots go to a VLM, PDFs are rendered into images or OCR’d into text, video gets sampled into frames, and then a language model tries to stitch the outputs together. Every boundary between models is a lossy compression step. The speech model may hear what was said but not what was on screen when it was said. The vision model may see the chart but not the voiceover. The planner gets a pile of summaries rather than a coherent sensory stream. Nemotron 3 Nano Omni is NVIDIA’s attempt to move the “eyes and ears” of an agent into a single efficient perception-and-reasoning model: video, audio, image, and text in; text out. NVIDIA announced it on April 28, 2026, positioning it as an open omni-modal reasoning model for agentic workflows like computer use, document intelligence, and long audio-video understanding.