The Sequence Opinion #827: Taming the Agentic Lobster: Learning from OpenClaw

Patterns, lessons learned, and best practices in new agentic architectures.

For the past few years, we’ve been treating Large Language Models (LLMs) as “brains in jars.” We type in a prompt, the brain generates a response, and then the universe resets. The model forgets you, your context, and your goals the moment you close the browser tab. This is fundamentally a stateless, passive interaction. But the trajectory of AI is moving from passive oracles to active, stateful agents. We are giving these brains hands, eyes, and most importantly, persistent memory.

If you’ve been paying attention to the GitHub trending charts or developer Twitter over the past two months, you’ve likely witnessed the meteoric rise of OpenClaw (originally known as Clawdbot). Created by Austrian vibe-coder Peter Steinberger—who recently took these ideas with him to OpenAI—OpenClaw has rapidly become the defining architecture for local, autonomous AI agents.

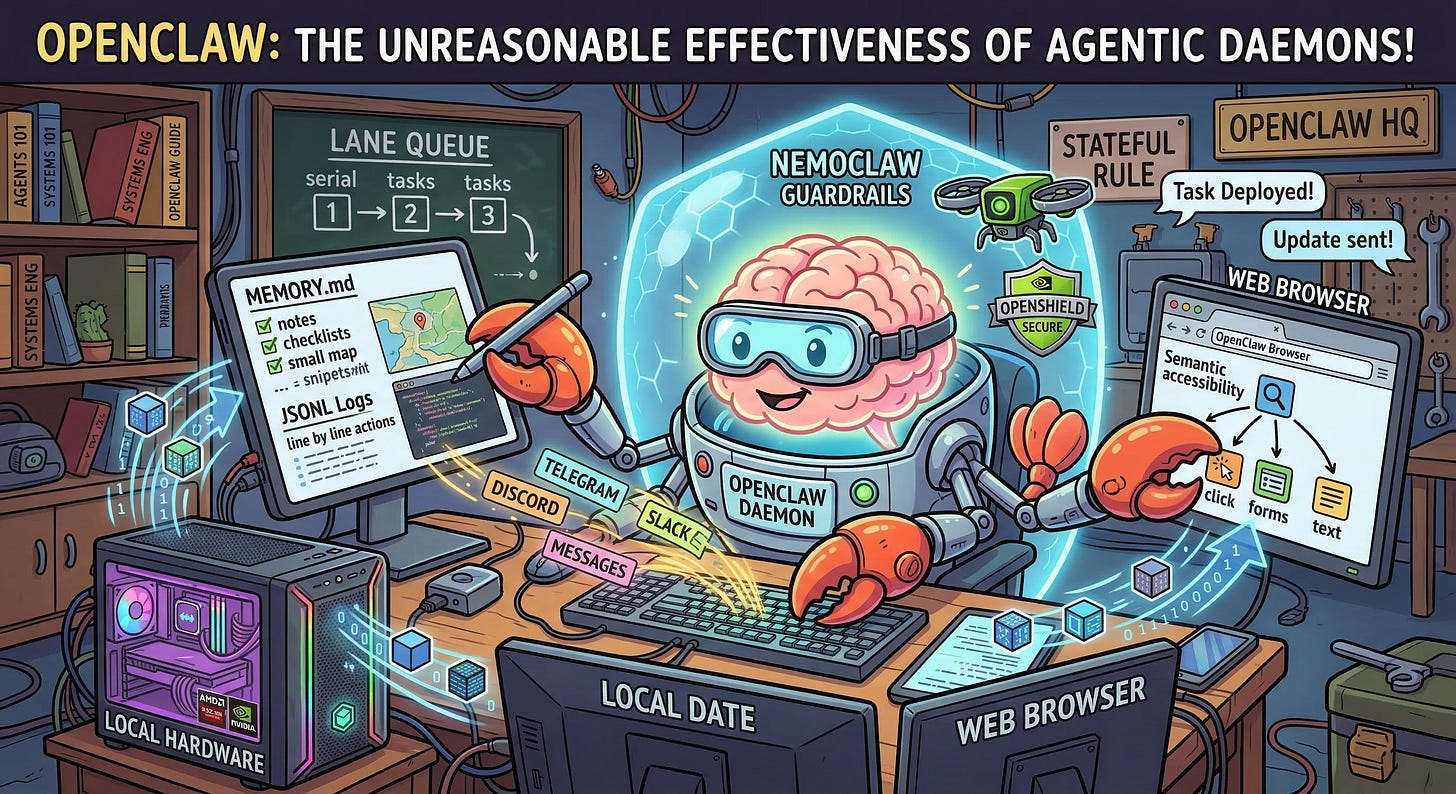

But what actually is it? OpenClaw isn’t a new foundation model. It’s an open-source orchestration layer—a daemon that lives on your hardware, connects to an LLM, and executes workflows across your messaging apps, local file system, and the web.
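To make the "orchestration layer" idea concrete, here is a minimal Python sketch of a stateful agent: it loads memory from disk, asks a model which tool to use, runs that tool, and persists the exchange. This is an illustration of the general pattern, not OpenClaw's actual code or API. `Agent`, `stub_llm`, the tool names, and the JSON memory file are all hypothetical, and the model call is stubbed so the example runs offline.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List


def stub_llm(message: str, memory: List[str]) -> Dict[str, str]:
    """Hypothetical stand-in for a real model call: picks a tool and an argument."""
    if message.startswith("read "):
        return {"tool": "read_file", "arg": message[len("read "):]}
    return {"tool": "reply", "arg": f"[{len(memory)} earlier messages] {message}"}


class Agent:
    """Toy orchestration layer: persistent memory plus tool dispatch (assumed design)."""

    def __init__(
        self,
        memory_path: Path,
        llm: Callable[[str, List[str]], Dict[str, str]] = stub_llm,
    ):
        self.memory_path = memory_path
        self.llm = llm
        # The "hands": each tool maps a string argument to a string result.
        self.tools: Dict[str, Callable[[str], str]] = {
            "read_file": lambda arg: Path(arg).read_text(),
            "reply": lambda arg: arg,
        }
        # The persistent memory: reload earlier messages if the file exists.
        self.memory: List[str] = (
            json.loads(memory_path.read_text()) if memory_path.exists() else []
        )

    def handle(self, message: str) -> str:
        # Ask the model what to do, run the chosen tool, then persist the message.
        action = self.llm(message, self.memory)
        result = self.tools[action["tool"]](action["arg"])
        self.memory.append(message)
        self.memory_path.write_text(json.dumps(self.memory))
        return result
```

Because memory lives on disk rather than in the process, a second `Agent` created with the same `memory_path` picks up where the first one left off. That is the difference between this loop and the "brain in a jar" interaction described earlier: the universe no longer resets between messages.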

Let’s dive under the hood and deconstruct the OpenClaw architecture. Once you understand how OpenClaw works, you understand the blueprint for how all production-grade agentic systems will operate.