The Sequence Knowledge #858: How State Space Models Went from Curiosity to Serious Transformer Competitor

Inside the core ideas, potential and challenges of SSMs

💡 AI Concept of the Day: How State Space Models Went from Curiosity to Serious Transformer Competitor

There is this thing that happens in ML research where a line of work gets quietly good for years, and then one day you wake up and it’s suddenly competing with the dominant paradigm. State space models are having that moment right now.

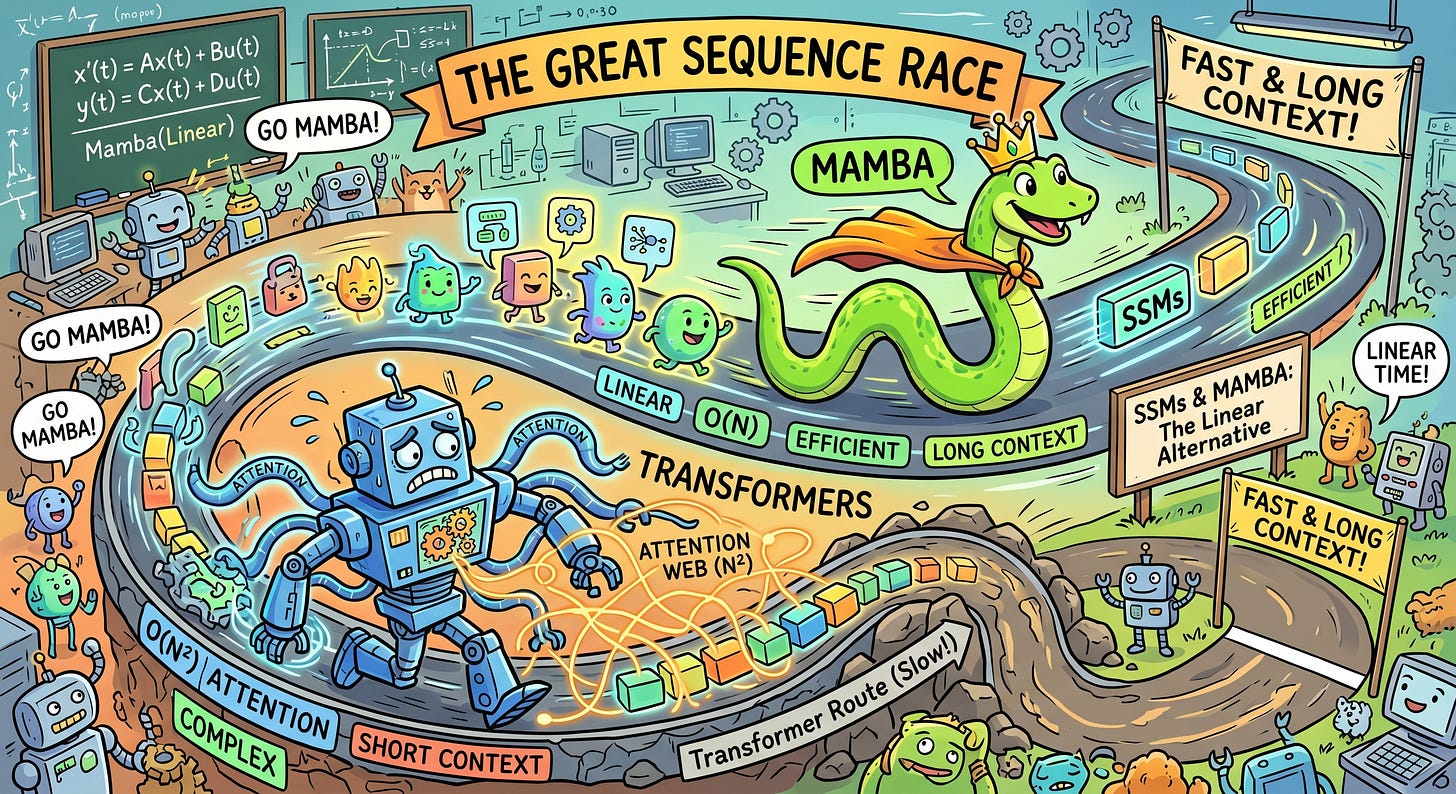

For the past eight years, the transformer has been the only architecture that matters. Self-attention, key-value caches, next-token prediction: it’s all we think about. And for good reason: the thing works. But transformers have a problem that everyone in the field knows about and nobody has fully solved. Self-attention compute is O(n²) in sequence length, and the KV-cache that makes decoding tolerable grows linearly with every token you keep in context. That’s not a theoretical concern anymore. When you’re trying to push context windows past a million tokens, or when you’re running inference on a 70B model and your KV-cache alone is eating 40GB of VRAM, that scaling stops being an academic footnote and starts being the actual engineering bottleneck.
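To make the KV-cache number concrete, here is a back-of-the-envelope sketch. The configuration is an assumption, not something stated above: a Llama-2-70B-style model with 80 layers, 8 key-value heads (grouped-query attention), a head dimension of 128, fp16 storage, and a single sequence in the batch. The function name `kv_cache_bytes` is made up for illustration.

```python
GIB = 1024 ** 3


def kv_cache_bytes(seq_len, n_layers=80, n_kv_heads=8, head_dim=128, bytes_per_elem=2):
    """Approximate KV-cache size for one sequence (assumed 70B-style config)."""
    # The leading 2 accounts for storing both a key and a value per layer.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem
    return per_token * seq_len


if __name__ == "__main__":
    for n in (4_096, 32_768, 131_072):
        print(f"{n:>7} tokens -> {kv_cache_bytes(n) / GIB:.2f} GiB")
```

Under these assumptions each token costs 320 KiB of cache, so a single 128K-token sequence lands at 40 GiB. The cache grows linearly with context length and with batch size, so serving several long sequences at once multiplies that figure.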

State space models offer a fundamentally different contract: compute that is linear in sequence length, a fixed-size state at inference, and no KV-cache at all. The question for the last three years has been whether they can match transformers on the things that matter: language modeling perplexity, in-context learning, reasoning. As of March 2026, the answer is: increasingly, yes.
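The "constant memory" part of that contract is easiest to see in code. What follows is a toy sketch of a diagonal linear recurrence, h_t = a * h_{t-1} + b * x_t with output y_t = c · h_t. It is not S4, Mamba, or any specific published model, and the names `ssm_scan`, `a`, `b`, and `c` are illustrative assumptions.

```python
def ssm_scan(xs, a, b, c):
    """Run a toy diagonal linear SSM over a sequence of scalar inputs.

    a, b, c are per-channel lists of equal length (the state dimension).
    Returns the outputs and the final state.
    """
    h = [0.0] * len(a)  # fixed-size state; its length never depends on len(xs)
    ys = []
    for x in xs:
        # One step of the recurrence: decay the old state, mix in the new input.
        h = [ai * hi + bi * x for ai, hi, bi in zip(a, h, b)]
        # Read out a scalar output from the current state.
        ys.append(sum(ci * hi for ci, hi in zip(c, h)))
    return ys, h


if __name__ == "__main__":
    ys, h = ssm_scan([1.0, 0.0, 0.0], a=[0.5, 0.9], b=[1.0, 1.0], c=[1.0, 1.0])
    print(ys, h)
```

Each step touches only the state `h`, so the work per token is constant and the total cost is linear in sequence length. Nothing from earlier tokens is stored except what the state retains, which is exactly where the open questions about recall and in-context learning come from.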

Let me walk you through how we got here.