The Sequence Knowledge #838: Project GENIE: Building Playable Worlds from Pixels

Google DeepMind's Project GENIE is one of the most ambitious releases in world models.

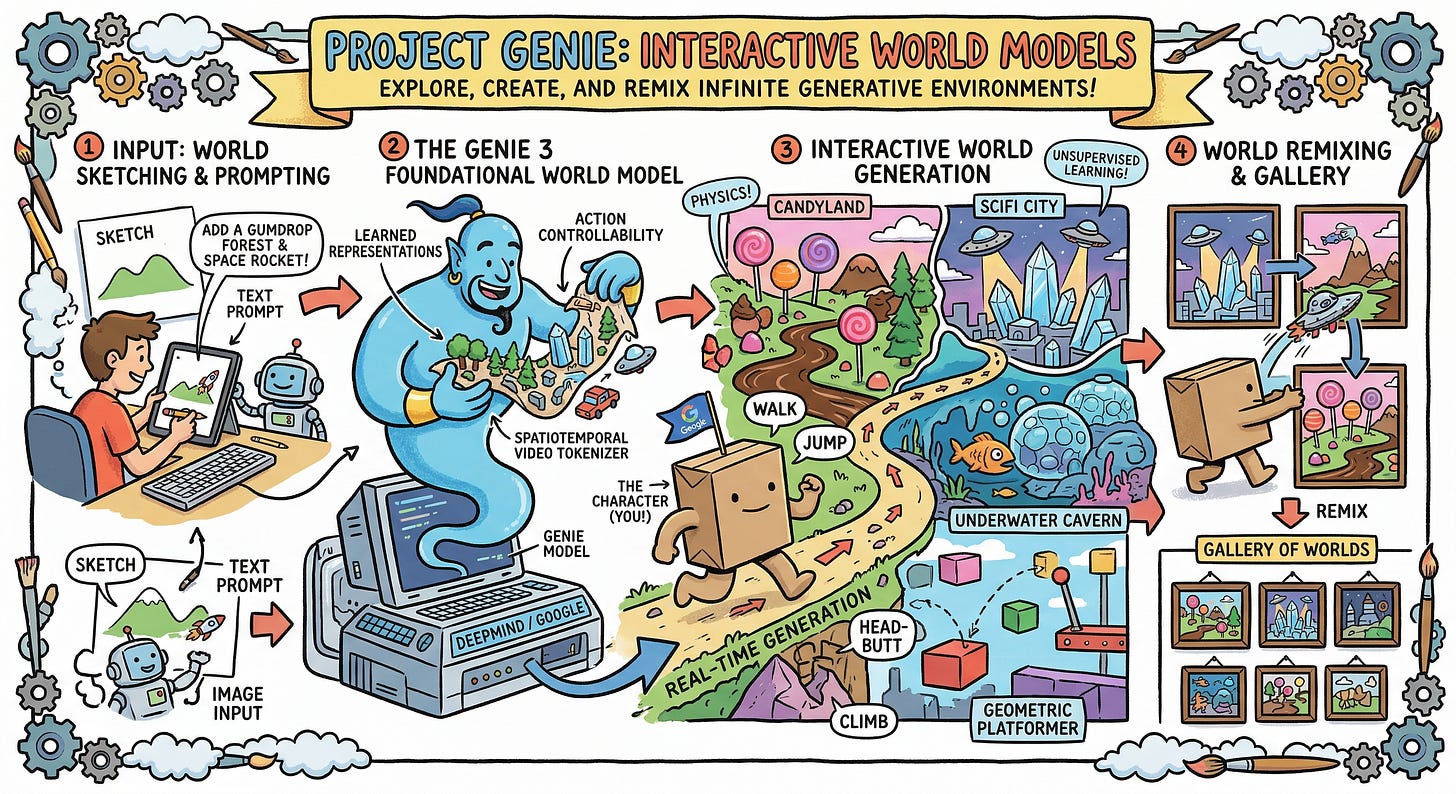

💡 AI Concept of the Day: Project GENIE: Building Playable Worlds from Pixels

I’ve spent a lot of time recently thinking about the “Simulator” hypothesis. In the early days of Large Language Models, we mostly treated them as fancy autocomplete. But as we scaled from GPT-2 to GPT-4, it became clear that to predict the next token accurately, the model had to build a robust internal representation of the world—a world model. If the text says, “The apple fell from the,” the model doesn’t just look for statistical neighbors; it effectively simulates gravity, tree height, and Newtonian physics to reliably output “tree.”

But there is a bandwidth problem. Text is a low-fidelity compression of human knowledge. It is a “keyhole” through which we view reality. If we want to reach the next stage of intelligence, we have to move toward high-bandwidth streams: video.

Project Genie represents a fundamental shift in this direction. It is a Generative Interactive Environment (GIE): not a video generator in the sense of a digital canvas (like Sora), but a foundation model for agency. It marks the transition from AI that talks to AI that simulates. It is the difference between watching a film of a maze and being the mouse inside it, where the walls are hallucinated into existence by a Transformer in real time, based on your every move.

1. The Architecture: Tokenizing Reality