🏋️♂️🤼♀️ Edge#246: OpenAI Used These Best Practices to Mitigate Risks While Training DALL-E 2

Preventing toxic content and reducing bias and memorization have been some of the main challenges faced by the DALL-E 2 team

On Thursdays, we dive deep into one of the freshest research papers or technology frameworks that is worth your attention. Our goal is to keep you up to date with new developments in AI to complement the concepts we debate in other editions of our newsletter.

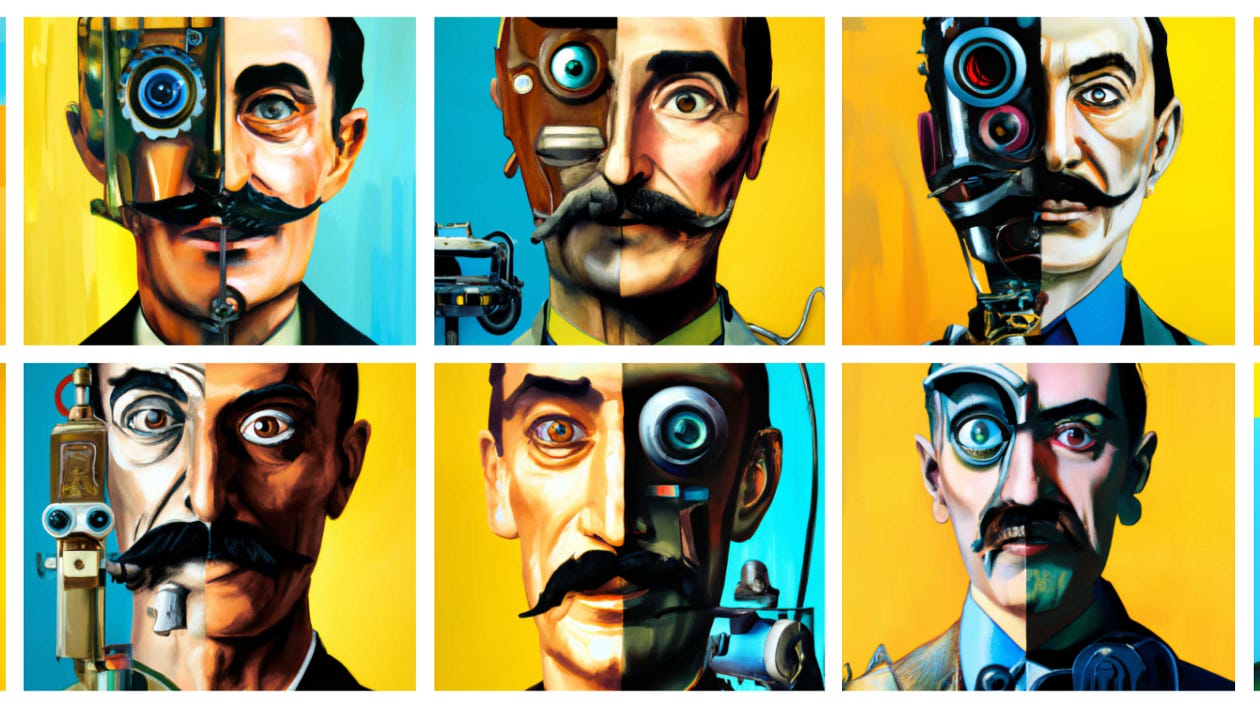

💥 What’s New in AI: OpenAI Used These Best Practices to Mitigate Risks While Training DALL-E 2

The deep learning space is experiencing a wave of innovation in text-to-image synthesis. Models such as OpenAI’s DALL-E 2, Midjourney, Meta’s Make-A-Scene, Stable Diffusion and Google’s Imagen have been pushing the boundaries of image generation based on textual inputs. Beyond their breathtaking capabilities, these models pose major ethical risks, as they can be used to generate fake, abusive, unethical or racist content. This is the main reason why companies like OpenAI, Meta and Google haven’t yet released full implementations of these models. How can we establish safety rails around the pretraining and inference processes of text-to-image generation models? In the case of OpenAI, the company recently discussed some of the best practices it used to mitigate risk in DALL-E 2.

OpenAI’s approach to risk mitigation with DALL-E 2 can be summarized in three fundamental areas: